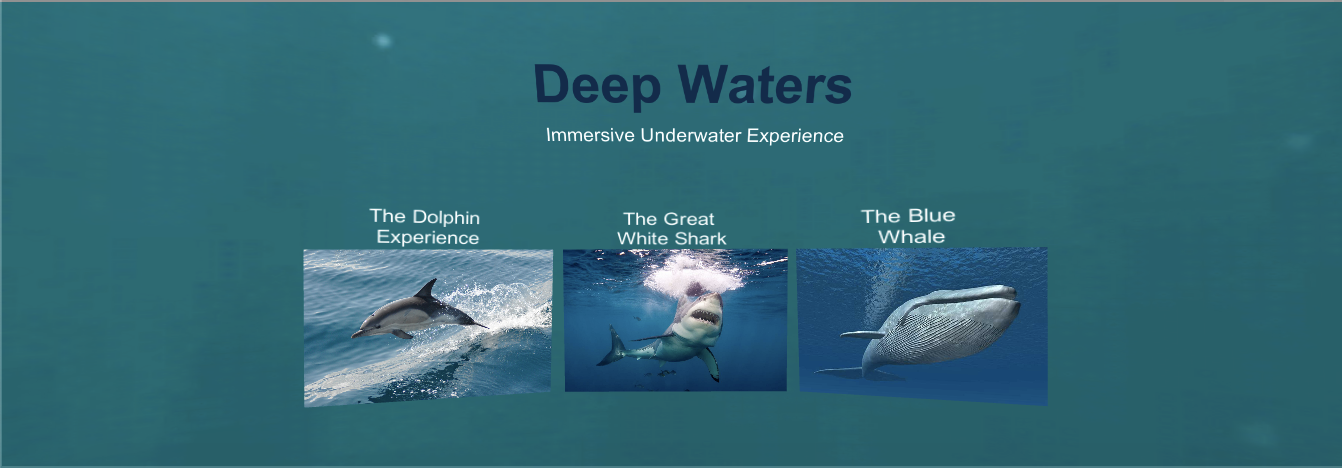

Deep Waters - VR Experience

Duration: December 2018 | Role: Interaction Designer

Purpose

This fun project explores the possibilities of interaction design in virtual reality (VR). The objective was to understand how users perceive three-dimensional space and to design a user interface that responds to their head motion and touch input.

VR technology gave me the opportunity to create an immersive environment in which users can experience underwater creatures in 360-degree video, delivered through an interactive interface.

I designed the experience using the following modalities:

Visual: motion, animation, visual cues and feedback, and the angles & placement of objects for accurate perception along the X, Y, and Z axes.

Audio: audio cues and feedback, ambient audio.

Haptic: feedback for touch input.

Constraints

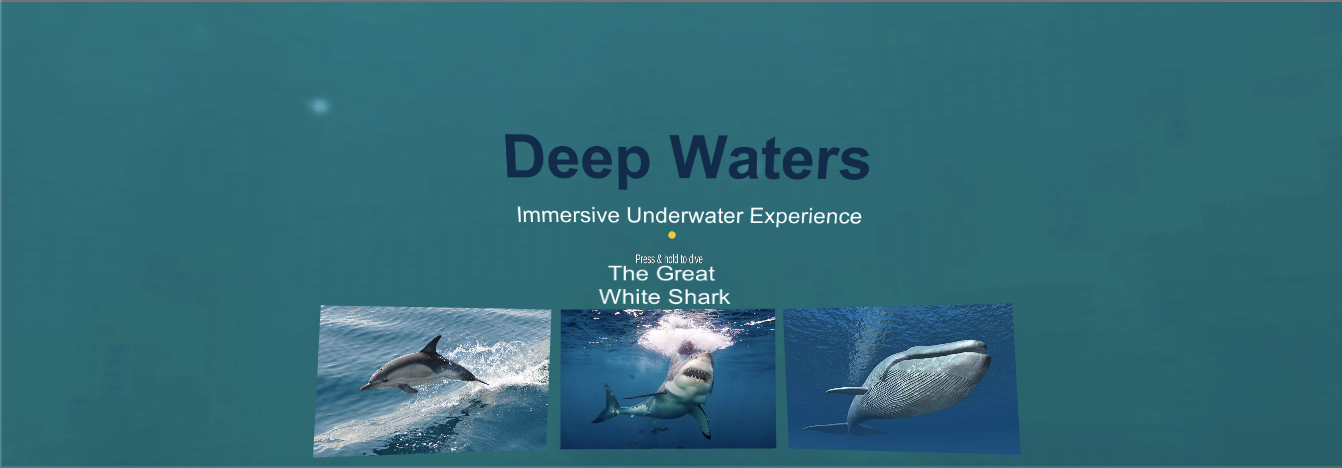

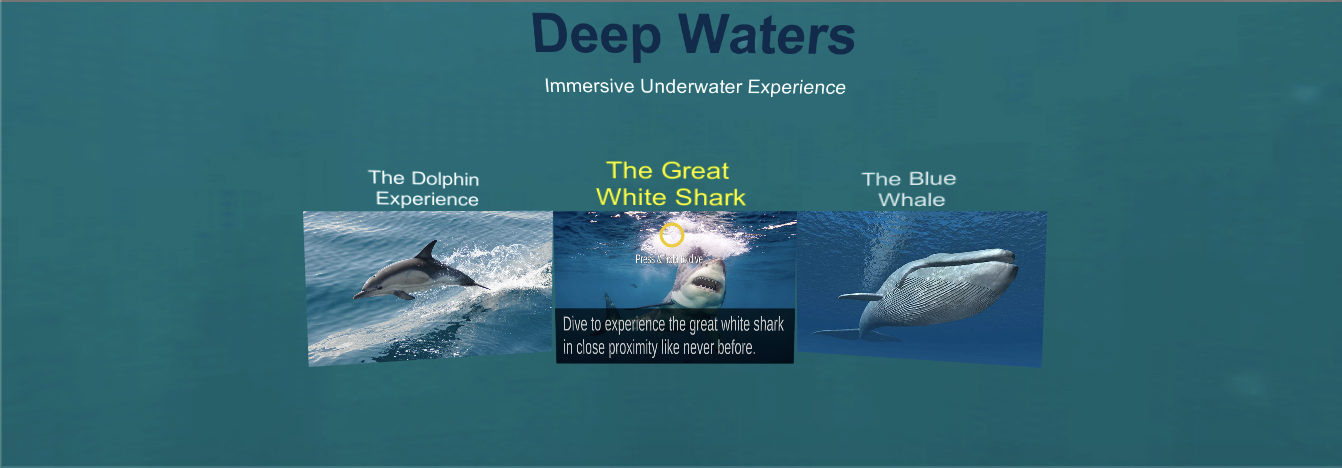

Navigation method: Head motion (X, Y axes).

Input method: A click.
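To illustrate how these two constraints can combine into gaze-based selection, here is a minimal sketch in Python. It is not the project's Unity/C# implementation: the names `Target`, `forward_from_head`, `gazed_target`, and `on_click`, the dashboard layout, and the 5-degree selection cone are all hypothetical. It also assumes a Unity-like coordinate convention (+Z forward, +Y up, +X right, positive pitch tilting the view down), with head motion modeled as pitch about the X axis and yaw about the Y axis.

```python
import math
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class Target:
    """A hypothetical interface element, e.g. a video thumbnail on the dashboard."""
    name: str
    direction: Vec3  # direction from the viewer's head to the element


def forward_from_head(yaw_deg: float, pitch_deg: float) -> Vec3:
    """Gaze vector after rotating the head by pitch (about X), then yaw (about Y).

    Assumed convention: +Z forward, +Y up, +X right; positive pitch looks down.
    """
    yaw, pitch = math.radians(yaw_deg), math.radians(pitch_deg)
    return (math.cos(pitch) * math.sin(yaw),
            -math.sin(pitch),
            math.cos(pitch) * math.cos(yaw))


def angle_deg(a: Vec3, b: Vec3) -> float:
    """Angle between two vectors, in degrees."""
    dot = sum(p * q for p, q in zip(a, b))
    norms = math.sqrt(sum(p * p for p in a)) * math.sqrt(sum(q * q for q in b))
    # Clamp to [-1, 1] to guard against floating-point drift before acos.
    return math.degrees(math.acos(max(-1.0, min(1.0, dot / norms))))


def gazed_target(yaw_deg: float, pitch_deg: float,
                 targets: Sequence[Target], cone_deg: float = 5.0) -> Optional[Target]:
    """Return the target closest to the gaze ray within the selection cone, if any."""
    gaze = forward_from_head(yaw_deg, pitch_deg)
    best, best_angle = None, cone_deg
    for t in targets:
        a = angle_deg(gaze, t.direction)
        if a <= best_angle:
            best, best_angle = t, a
    return best


def on_click(yaw_deg: float, pitch_deg: float, targets: Sequence[Target]) -> Optional[str]:
    """A click activates whatever is under the gaze; clicking empty space does nothing."""
    hit = gazed_target(yaw_deg, pitch_deg, targets)
    return hit.name if hit else None


# Hypothetical dashboard: one thumbnail straight ahead, one to the right, one above.
menu = [Target("video_ahead", (0.0, 0.0, 1.0)),
        Target("video_right", (1.0, 0.0, 0.0)),
        Target("video_above", (0.0, 1.0, 0.0))]

print(on_click(0, 0, menu))    # video_ahead
print(on_click(90, 0, menu))   # video_right
print(on_click(0, -90, menu))  # video_above (negative pitch looks up)
print(on_click(45, 0, menu))   # None: 45 degrees away from every target
```

Using a small angular cone instead of an exact ray hit keeps selection forgiving of slight head jitter. In Unity itself, the comparable step would typically be a raycast from the camera along its forward direction.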

Understanding VR guidelines & sketching the user interface

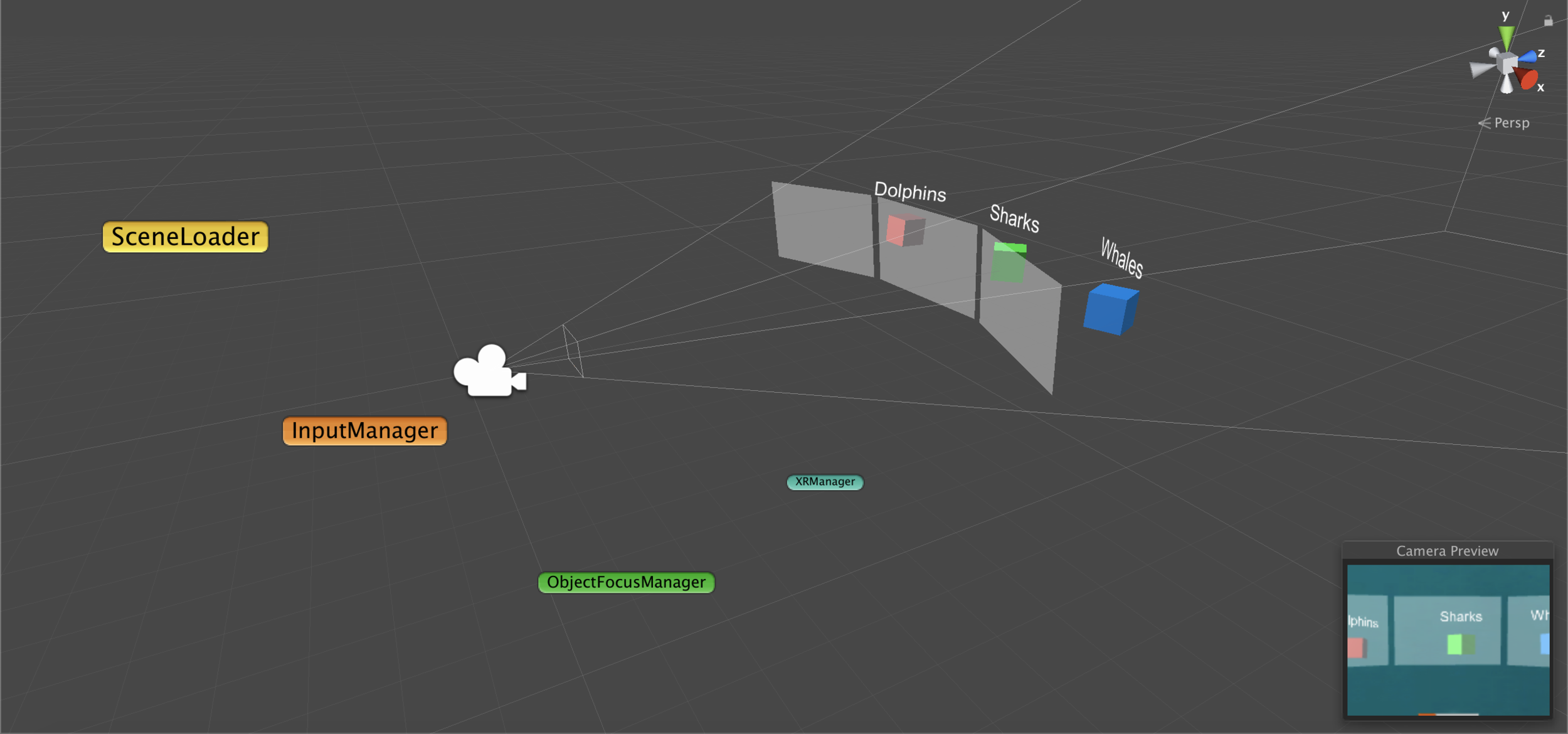

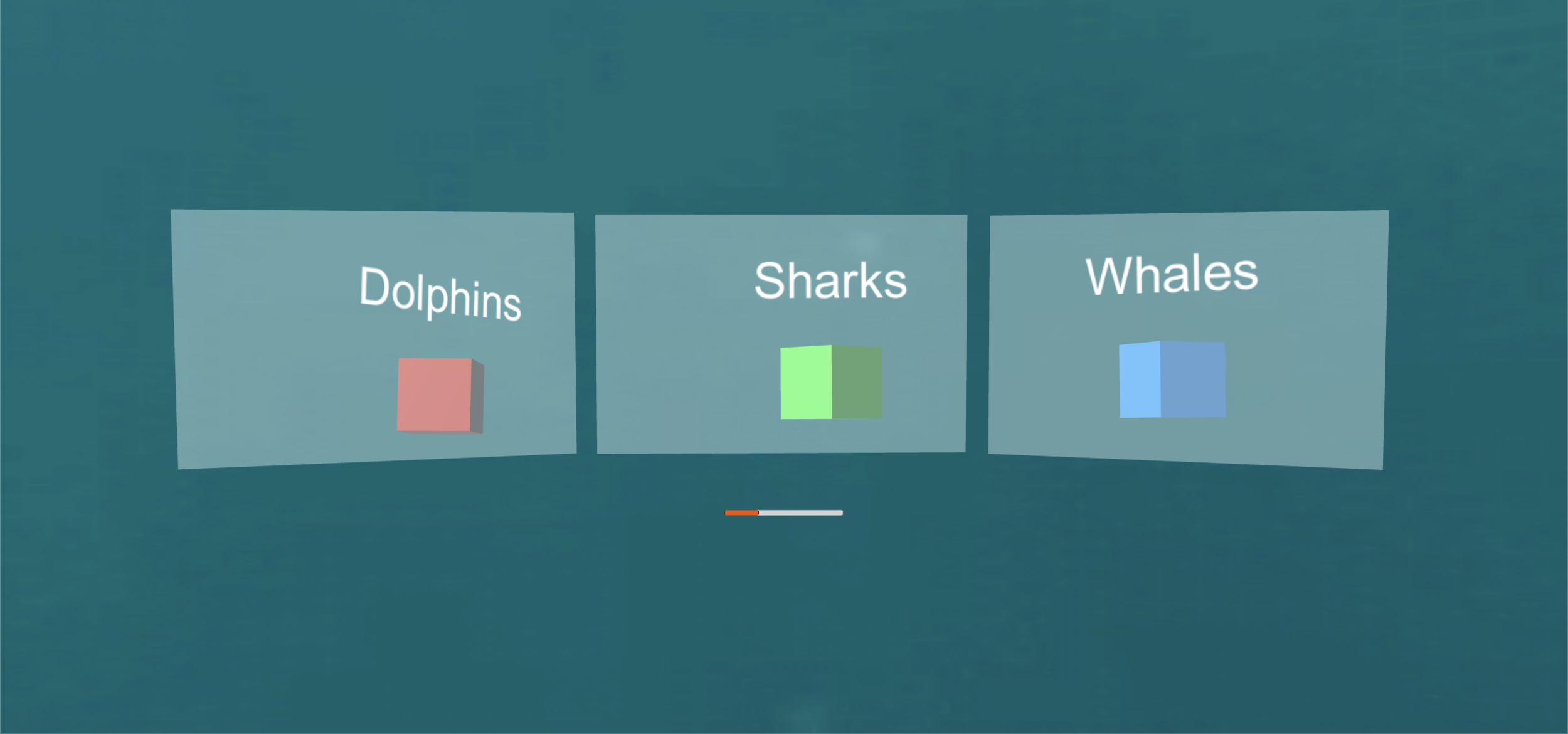

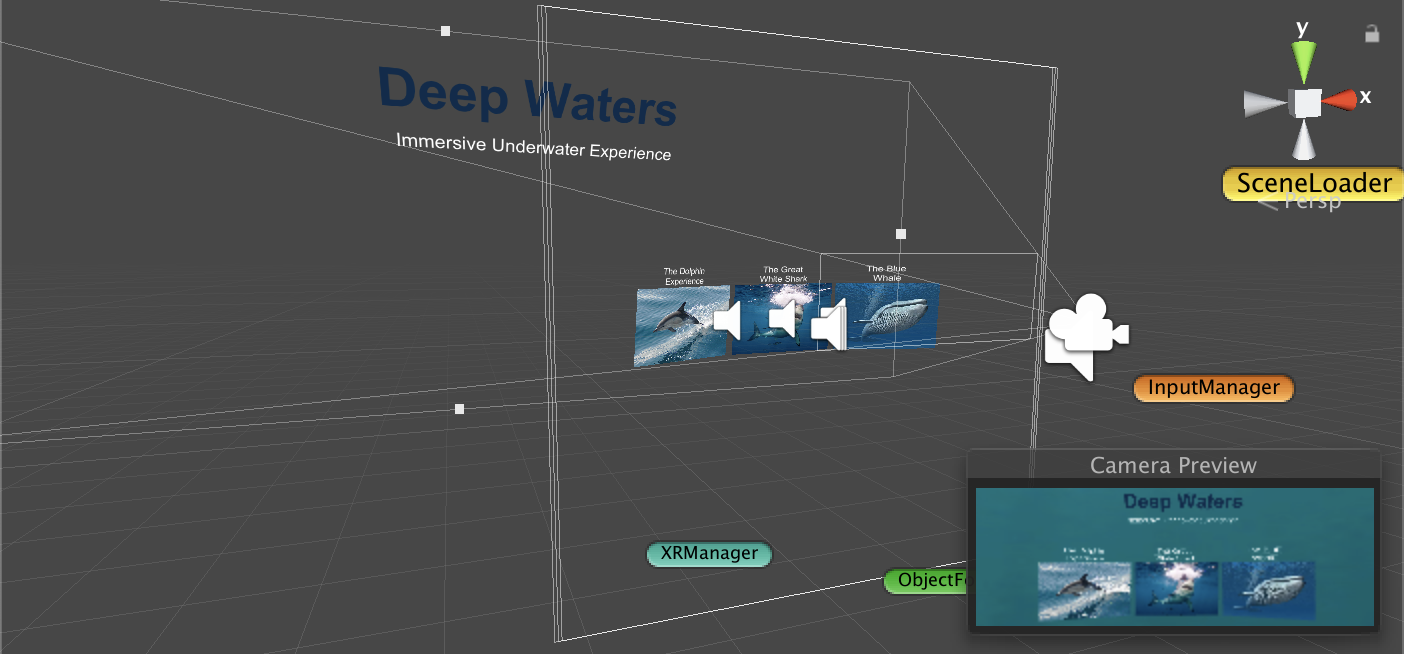

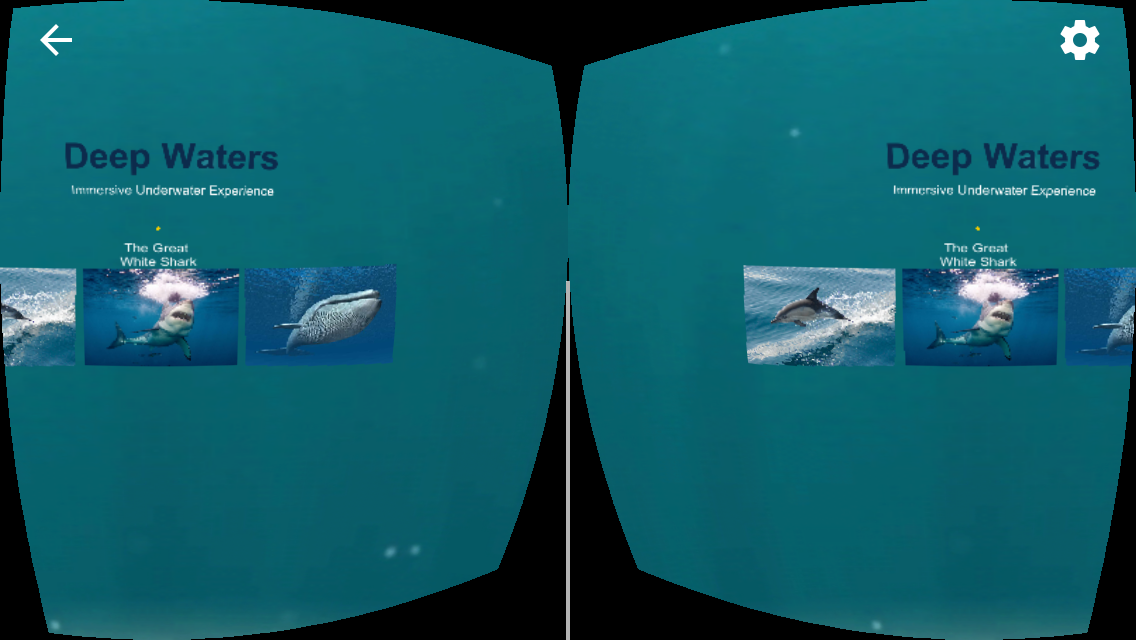

Prototyping

The image gallery above gives an overview of the design & build process from start to finish.

Note: The interface elements in the images aren’t rendered sharply because the stereoscopic view through Google Cardboard is perceived differently from the camera preview in the Unity Editor. The Google Cardboard lenses magnify the interface elements and make objects appear closer to the viewer.

AUDIO

Sequential, interaction-based audio cues for loading a 360-degree video from the dashboard screen:

ANIMATION

Outcome

Note: Haptic feedback (phone vibration) is indicated by a “Haptic feedback” label below the iPhone screen recording; the label appears during touch input.

Technology Required

Unity (video game engine), C# scripting, Google VR SDK for Unity, Sketch, Google Cardboard.

Project Repository

https://github.com/abhinitparelkar/deepwaters.git

Lessons Learned

This project gave me perspective on designing & prototyping for three-dimensional space.